Practical AI Fluency: Learning Through Building

How we're fixing the 2.1% module completion rate with personalized, practical learning paths


The Problem

What We Observed

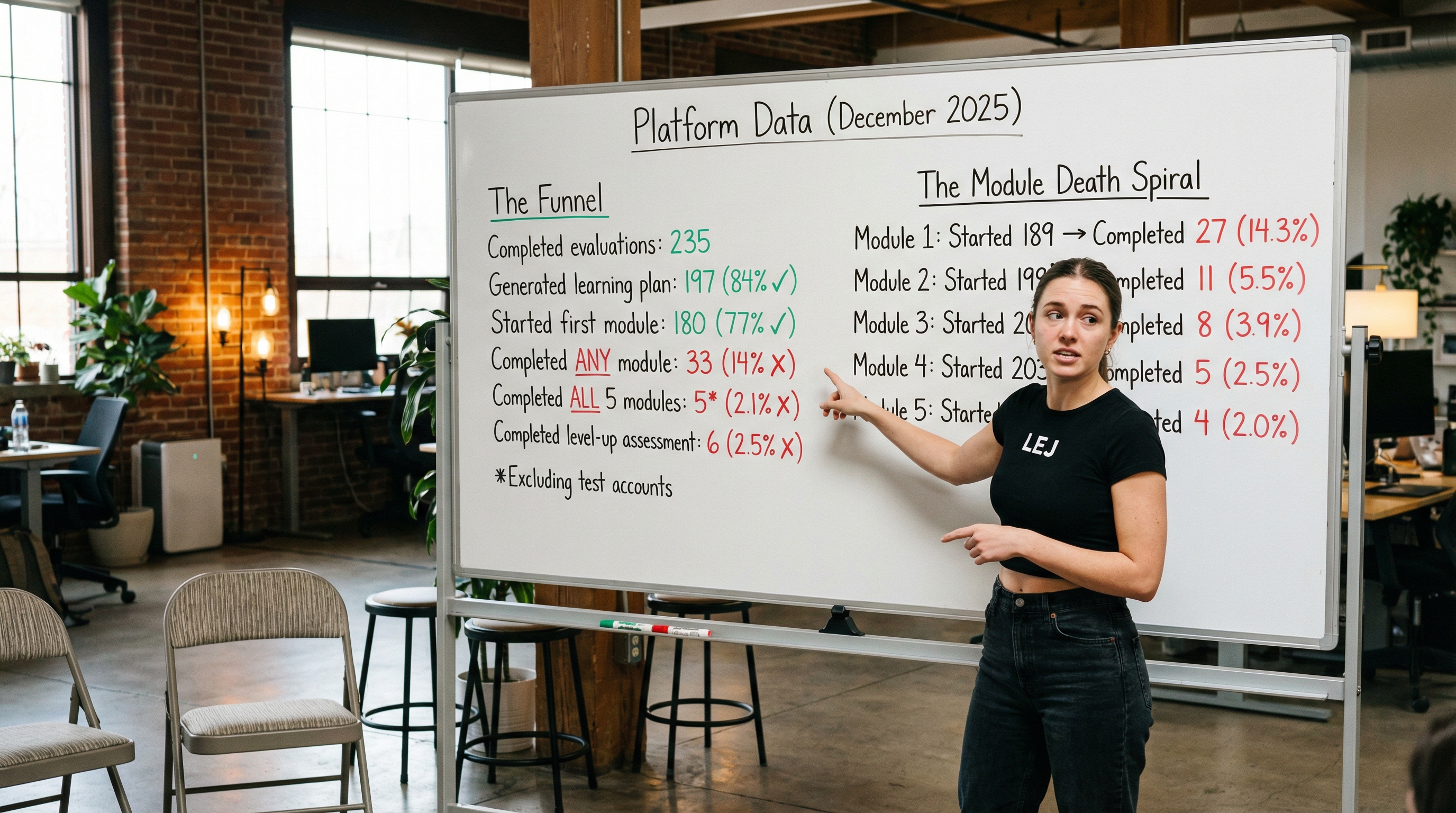

Our production data reveals a paradox: users are genuinely interested in learning, but they don't follow through. Of 235 users who completed evaluations, 84% generated a learning plan and 77% started their first module. Interest is high. But only 14% completed even a single module, and just 2.1% (5 users, excluding test accounts) finished all five. The module-by-module data shows the same pattern: completion rates drop from 14% in Module 1 to 2% by Module 5.

Users are clicking through, exploring and browsing, but not learning. They open the plan with good intentions, and then life wins. "AI fluency fundamentals" can't compete with everything else demanding their attention because there's no immediate payoff, no tangible outcome, no answer to "why should I care about this today?"

What Research Confirms

This isn't unique to our platform; it's a well-documented pattern in online education. MOOC research shows completion rates below 13%, with dropout exceeding 90% on many platforms. A Duke University course lost a quarter of its students before the first lecture even began. The reasons are consistent: lack of immediate relevance, no clear connection to personal goals, and the inability to see how abstract knowledge applies to real life. Meanwhile, research on learning transfer shows that less than 20% of knowledge acquired in training is actually applied in the workplace. The gap between theory and practice is where learning goes to die.

But the research also points to a solution: problem-based learning improves motivation, retention, and critical thinking. An often-quoted rule of thumb says learners retain roughly 10% of what they read but up to 90% of what they learn by doing; the exact percentages are contested, but the direction matches the problem-based learning findings. Purpose-driven learners, those with clear, practical goals, show significantly better self-regulation and long-term retention. The takeaway: people don't learn tools, they learn outcomes.

Platform Data (Production Database - December 2025)

The Funnel

| Stage | Users | Conversion (of 235 evaluations) |
|---|---|---|
| Completed evaluations | 235 | (baseline) |
| Generated learning plan | 197 | 84% ✓ |
| Started first module | 180 | 77% ✓ |
| Completed ANY module | 33 | 14% ✗ |
| Completed ALL 5 modules | 5* | 2.1% ✗ |
| Completed level-up assessment | 6 | 2.6% ✗ |

*Excluding test accounts
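The conversion column can be reproduced from the raw counts. The sketch below assumes every stage is measured against the 235 completed evaluations, which matches the published percentages, and rounds to one decimal place. Under that rounding the level-up assessment row comes out at 2.6% (6/235 is roughly 2.55%). The names `FunnelStage` and `conversionPercent` are illustrative and not taken from the codebase.

```typescript
// Funnel counts copied from the table above. Every rate uses the
// 235 completed evaluations as its denominator (an assumption that
// matches the published percentages).
interface FunnelStage {
  label: string;
  users: number;
}

const EVALUATIONS_COMPLETED = 235;

const funnelStages: FunnelStage[] = [
  { label: "Completed evaluations", users: EVALUATIONS_COMPLETED },
  { label: "Generated learning plan", users: 197 },
  { label: "Started first module", users: 180 },
  { label: "Completed ANY module", users: 33 },
  { label: "Completed ALL 5 modules", users: 5 },
  { label: "Completed level-up assessment", users: 6 },
];

// Percentage of the baseline, rounded to one decimal place.
function conversionPercent(users: number, baseline: number): number {
  if (baseline <= 0) {
    throw new Error("baseline must be positive");
  }
  return Math.round((users / baseline) * 1000) / 10;
}

const funnelRates = funnelStages.map((stage) => ({
  label: stage.label,
  percent: conversionPercent(stage.users, EVALUATIONS_COMPLETED),
}));

for (const row of funnelRates) {
  console.log(row.label + ": " + row.percent.toFixed(1) + "%");
}
```

Keeping the denominator explicit makes it easy to rerun the same calculation against a fresh export of the production data.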

The Module Death Spiral

| Module | Started | Completed | Completion Rate |
|---|---|---|---|
| Module 1 | 189 | 27 | 14.3% |
| Module 2 | 199 | 11 | 5.5% |
| Module 3 | 204 | 8 | 3.9% |
| Module 4 | 203 | 5 | 2.5% |
| Module 5 | 205 | 4 | 2.0% |

The problem isn't awareness or interest. It's sustained engagement without purpose.

The Insight

During evaluations, users naturally reveal everything we need:

- Their industry (film production, marketing, healthcare)

- Their tools (Airtable, DaVinci Resolve, Notion)

- Their pain points (client communication, inventory chaos, repetitive tasks)

- Their projects (building a CRM, managing a team, launching a product)

We're sitting on a goldmine of context. We just haven't been using it.

The Solution

Same destination, different vehicle. AI fluency delivered through personal relevance.

The research points to a clear set of strategies that dramatically improve learning completion:

| Strategy | Evidence | Application |
|---|---|---|
| Choice & Autonomy | "Mere choice" increases interest; even trivial choices boost engagement. Autonomy is one of three innate psychological needs (Self-Determination Theory). | Users pick from personalized options rather than being assigned a path |
| Personalization | 8-12 point academic improvements, 15% dropout reduction. Low-interest learners benefit most from personalized interventions. | Options derived from user's own context (industry, tools, pain points) |
| Quick Wins | Tangible results sustain motivation. Dopamine from early wins keeps learners engaged through harder material. | Practical outcomes visible within the first module |
| Mastery Goals | Mastery orientation (developing ability) sustains interest better than performance orientation (demonstrating ability). | Skill badges and projects built > leaderboard rank |
| Microlearning | 3-7 minute chunks match how brains retain information. Shorter modules reduce cognitive overload. | Focused 3-4 module paths vs. sprawling 5-module fundamentals |

What This Looks Like

After completing an evaluation, users don't see "Here's your AI fluency learning path." They see:

"Based on what you shared, here's how AI can help YOUR life:"

- AI-Powered Gear Inventory — Track your production equipment with smart categorization

- Client Update Automator — Generate professional status emails in your voice

- Shot List Generator — Turn scripts into organized shot lists

- Invoice Follow-up Assistant — Polite payment reminders that don't feel robotic

Or tell us what YOU want to learn...

They pick one. We generate a focused 3-4 module path that teaches AI fluency through building something they actually want.

The fundamentals are still there—prompting, iteration, understanding AI limitations—but they're woven into a project that matters to them. They're not learning "how to prompt." They're learning "how to get this invoice assistant to sound like me."

This isn't abandoning AI fluency education. It's delivering it through a vehicle that actually reaches the destination.

The User Journey

EVALUATION (1 credit)

↓

RESULTS + PERSONALIZED OPTIONS

"Your score is 5.8. Here's how AI helps YOUR life..."

↓

FIRST PRACTICAL PATH (free, included)

3-4 modules building something real

↓

ASSESSMENT

"You built X. In doing so, you demonstrated: [specific fluency gains]"

↓

NEW OPTIONS (informed by everything so far)

"Based on what you just learned, here's what you could tackle next..."

↓

NEXT PATH (1 credit)

↓

[REPEAT INFINITELY]

Each cycle:

- Adds context about the user

- Builds practical skills

- Earns badges in skill categories

- Generates smarter recommendations for next time

The Skill System

The unified 1-10 score becomes a richer skill profile:

| Skill Category | Level | Projects Built |
|---|---|---|
| AI Automation | 4 | Gear Inventory, Client Emailer |
| Data & Analysis | 2 | Project Cost Tracker |
| AI Writing | 3 | Update Automator, Proposal Drafter |
| Creative AI | 1 | Color Grade Matcher |

This matters more than a single number. It shows what you can actually do.
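One possible way to represent this profile in code is a list of per-category records. This is a minimal sketch, not the actual schema: the type name, field names, and the `recordProject` helper are hypothetical. This document does not say how a finished project changes a level, so the helper takes the new level as an input from the assessment step instead of computing it.

```typescript
// Hypothetical shape for one row of the skill profile table.
interface SkillCategoryProgress {
  category: string;
  level: number;
  projectsBuilt: string[];
}

// Example data copied from the table above.
const exampleProfile: SkillCategoryProgress[] = [
  { category: "AI Automation", level: 4, projectsBuilt: ["Gear Inventory", "Client Emailer"] },
  { category: "Data & Analysis", level: 2, projectsBuilt: ["Project Cost Tracker"] },
  { category: "AI Writing", level: 3, projectsBuilt: ["Update Automator", "Proposal Drafter"] },
  { category: "Creative AI", level: 1, projectsBuilt: ["Color Grade Matcher"] },
];

// Returns a new profile with the project recorded under the category.
// The leveling rule is not specified in this document, so the caller
// supplies the level decided by the assessment.
function recordProject(
  profile: SkillCategoryProgress[],
  category: string,
  project: string,
  assessedLevel: number
): SkillCategoryProgress[] {
  const updated: SkillCategoryProgress[] = [];
  let found = false;
  for (const entry of profile) {
    if (entry.category === category) {
      found = true;
      updated.push({
        category: entry.category,
        level: assessedLevel,
        projectsBuilt: entry.projectsBuilt.concat([project]),
      });
    } else {
      updated.push(entry);
    }
  }
  if (!found) {
    // First project in a category the user has not touched before.
    updated.push({ category: category, level: assessedLevel, projectsBuilt: [project] });
  }
  return updated;
}
```

Returning a new array instead of mutating the old one keeps earlier snapshots intact, which would help if managers later want to see progress over time.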

Why This Works

Agency: Users choose their path. They're not assigned homework.

Relevance: Options come from their world, not a generic curriculum.

Immediacy: "Invoice follow-up assistant" solves a problem they have right now.

Retention: Purpose-driven learning sticks. Theory-first learning fades.

Freshness: AI knowledge gets stale fast (thinking models are 1 year old, agents are 6 months old). But "I built an inventory system" stays valuable even as AI evolves. The project anchors the learning.

Achievable: 3-4 modules with a clear outcome vs. 5 modules of "fundamentals."

The Business Model

- Initial evaluation: 1 credit (includes first practical path free)

- Additional paths: 1 credit each

- Assessments: Built into the path flow

Users aren't paying to "learn AI fluency." They're paying to build things that make their lives easier. The value prop is tangible.
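The pricing rules above reduce to a small piece of arithmetic. The sketch below assumes the user has already paid for the evaluation and that "additional paths" means every path after the first; the constant and function names are illustrative.

```typescript
// Pricing from the list above: the evaluation costs 1 credit and
// includes the first practical path; each later path costs 1 credit.
const EVALUATION_CREDITS = 1;
const ADDITIONAL_PATH_CREDITS = 1;

// Total credits a user has spent after the evaluation plus
// `pathsStarted` practical paths.
function creditsSpent(pathsStarted: number): number {
  if (pathsStarted < 0 || Math.floor(pathsStarted) !== pathsStarted) {
    throw new Error("pathsStarted must be a non-negative integer");
  }
  const paidPaths = Math.max(0, pathsStarted - 1); // first path is free
  return EVALUATION_CREDITS + paidPaths * ADDITIONAL_PATH_CREDITS;
}

console.log(creditsSpent(1)); // evaluation plus the included first path
console.log(creditsSpent(3)); // evaluation plus two paid paths
```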

Teams (Future)

Teams inject their own context:

"We're a marketing agency. We use HubSpot and Figma. Priority: content creation and automation."

Individual employees see options filtered through company priorities:

- AI Headline Generator for HubSpot campaigns

- Figma-to-copy workflow

- Social media scheduler with AI suggestions

Managers see skill distribution across the team, gaps to address, and progress over time.

The Bigger Picture

This mechanic is domain-agnostic:

Evaluation → Extract Context → Generate Personalized Options → User Selects → Generate Targeted Path

It works for AI fluency. It works for photography. Cooking. Business skills. Mathematics. Journalism. Any domain where people want practical outcomes, not abstract education.
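The five-step mechanic can be written as a generic pipeline in which only the three domain-specific steps change. This is a sketch under assumptions: the `LearningDomain` interface, `runCycle`, and the toy domain are hypothetical names, and the toy implementations are plain string stubs standing in for whatever model calls a real domain would make.

```typescript
// The domain supplies three steps; the user supplies the selection.
interface LearningDomain<Evaluation, Context, Choice, Path> {
  extractContext(evaluation: Evaluation): Context;
  generateOptions(context: Context): Choice[];
  generatePath(context: Context, choice: Choice): Path;
}

// Evaluation -> Extract Context -> Generate Options -> User Selects -> Path
function runCycle<E, C, O, P>(
  domain: LearningDomain<E, C, O, P>,
  evaluation: E,
  select: (options: O[]) => O
): P {
  const context = domain.extractContext(evaluation);
  const options = domain.generateOptions(context);
  if (options.length === 0) {
    throw new Error("no options generated from context");
  }
  const choice = select(options);
  return domain.generatePath(context, choice);
}

// Toy domain: the "evaluation" is a comma-separated list of pain points.
const toyDomain: LearningDomain<string, string[], string, string[]> = {
  extractContext: (transcript) => transcript.split(",").map((s) => s.trim()),
  generateOptions: (painPoints) => painPoints.map((p) => "Build a helper for " + p),
  generatePath: (_painPoints, choice) => [
    choice + ": first draft",
    choice + ": iterate",
    choice + ": put it to use",
  ],
};

// The user picks the first option in this example.
const toyPath = runCycle(toyDomain, "invoice follow-ups, shot lists", (options) => options[0]);
console.log(toyPath);
```

Swapping in a photography or cooking domain would mean writing a new `LearningDomain` object while `runCycle` stays the same.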

The One-Liner

Don't teach people about AI. Show them what AI can build for their life—then teach them while they're building it.

Open Questions

- How do we extract structured context from evaluation transcripts? → SOLVED: Stopgap implemented

- What's the taxonomy of skill categories?

- How do assessments work for practical paths?

- How does gamification/leaderboard evolve?

- What's the minimum viable version to test? → DONE: Stopgap deployed, testing live

- How do we migrate existing users?

Stopgap Implementation (Deployed December 2025)

While the full "Learning Revolution" requires architectural changes, we've deployed a stopgap that delivers immediate value: richer context extraction during evaluations and practical-first learning plans.

What Changed

| Component | Before | After |
|---|---|---|
| Section 1 turns | 4 turns | 6 turns |
| Section 1 focus | Generic AI tool inventory | Work context, projects, pain points, AI wishlist |
| Section 1 score | Variable 1-10 (never actually used) | Hardcoded 10/10 (everyone gets full marks for showing up) |
| Learning plan focus | "Close skill gaps" | "Build something useful WHILE closing skill gaps" |
| Module output | "Template or example" | "Working tool they'll use tomorrow" |

Files Modified

supabase/functions/evaluation-chat/index.ts # SECTION_TURN_COUNTS[1]: 4 → 6

supabase/functions/evaluation-monitoring/index.ts # Same

supabase/migrations/20251219163446_update_section1_turn_count.sql # SQL function
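The change in the two edge functions might look roughly like the following. Only the constant name `SECTION_TURN_COUNTS` and the Section 1 value (6, previously 4) come from this document; the map shape, the `isSectionComplete` helper, and the omission of other sections are assumptions made for illustration.

```typescript
// Assumed shape: section number -> turns required before the wrap-up.
// Only Section 1's value is known from this document (4 before, 6 now).
const SECTION_TURN_COUNTS: Record<number, number> = {
  1: 6,
};

// Hypothetical helper: true once the section has used all of its turns.
function isSectionComplete(section: number, turnsTaken: number): boolean {
  const required = SECTION_TURN_COUNTS[section];
  if (required === undefined) {
    throw new Error("no turn count configured for section " + section);
  }
  return turnsTaken >= required;
}

console.log(isSectionComplete(1, 4)); // the old limit no longer ends Section 1
console.log(isSectionComplete(1, 6));
```

Because the same number appears in two functions and a SQL function, all three need to change together whenever the turn count is adjusted.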

Prompts Updated (via Prompt Manager UI)

1. Section 1 Evaluation Prompt (base_fluency_section_1)

This prompt transforms Section 1 from a generic "what AI tools do you use?" conversation into a deep context-gathering session. Key changes:

- Extended to 6 turns (up from 4) to gather richer context

- Pivots from tools to work context on Turn 3: "Let's step back from AI for a moment. Tell me about what you actually do..."

- Explicitly captures structured data: industry/domain, role, tools (AI and non-AI), current projects, workflows, pain points, and AI wishlist

- Identifies a "Practical Opportunity": One specific, actionable thing AI could help them build that would make an immediate difference

- Not scored: Everyone gets 10/10 for this section—it's about understanding them, not evaluating them

The wrap-up prompt at the end of Section 1 outputs a structured summary with labeled fields that feed directly into the learning plan generator.
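To show how labeled fields could feed the generator, here is a minimal parser sketch. It assumes one `LABEL: value` pair per line, which is a guess at the wrap-up format. Only the "PRACTICAL OPPORTUNITY" label is named in this document; the other labels in the example, and both function names, are hypothetical.

```typescript
// Parses "LABEL: value" lines into a lookup keyed by upper-cased label.
// The one-pair-per-line format is an assumption about the wrap-up output.
function parseLabeledSummary(summary: string): Record<string, string> {
  const fields: Record<string, string> = {};
  for (const rawLine of summary.split("\n")) {
    const line = rawLine.trim();
    const colon = line.indexOf(":");
    if (colon <= 0) {
      continue; // skip blank lines and lines without a label
    }
    const label = line.slice(0, colon).trim().toUpperCase();
    const value = line.slice(colon + 1).trim();
    if (value.length > 0) {
      fields[label] = value;
    }
  }
  return fields;
}

// Returns the field the learning plan generator builds Module 1 on,
// or null when the summary did not include one.
function practicalOpportunity(fields: Record<string, string>): string | null {
  const value = fields["PRACTICAL OPPORTUNITY"];
  return value === undefined ? null : value;
}

// Example summary; every label except PRACTICAL OPPORTUNITY is hypothetical.
const exampleSummary = [
  "INDUSTRY: Film production",
  "TOOLS: Airtable, DaVinci Resolve",
  "PAIN POINTS: client communication",
  "PRACTICAL OPPORTUNITY: Client update email template",
].join("\n");

const exampleFields = parseLabeledSummary(exampleSummary);
console.log(practicalOpportunity(exampleFields));
```

Returning `null` for a missing field lets the generator fall back to a generic first module instead of failing on an incomplete summary.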

2. Learning Plan Generator Prompt (generate_learning_plan)

This prompt takes the rich context from Section 1 and generates a personalized first module. Key principles:

- Practical-first: The module focus is written as what they'll HAVE, not what they'll LEARN ("A reusable client update template" not "Understanding prompt iteration")

- Context extraction: Explicitly looks for the Section 1 "PRACTICAL OPPORTUNITY" field as the foundation for Module 1

- Industry-specific examples: A film producer gets a client update email template; a marketer gets a headline generator; a developer gets a code review checklist

- Time-boxed: 30-45 minutes, max 3 phases, completable in one sitting

- Velocity-aware: LOW velocity users get simple single-purpose tools; HIGH velocity users get sophisticated multi-step systems

- Forward-looking: Generates `module_2_context`, which explains how the next module should build on and extend the deliverable from Module 1

The output includes user_context (extracted from evaluation), target_score, the module itself with focus/exercise/successcriteria, a creative `module1_name`, and context for generating Module 2.
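The output shape and the time-box rule could be expressed as types plus a small check. The top-level field names below are copied as written in this section. The field types, and the `estimated_minutes` and `phases` fields used to express "30-45 minutes, max 3 phases", are assumptions for illustration rather than the generator's real schema.

```typescript
interface PlanModule {
  focus: string; // what the user will HAVE, not what they will learn
  exercise: string;
  successcriteria: string;
  // Hypothetical fields, used here only to express the time-box rule.
  estimated_minutes: number;
  phases: string[];
}

interface LearningPlanOutput {
  user_context: string; // extracted from the evaluation
  target_score: number;
  module: PlanModule;
  module1_name: string;
  module_2_context: string; // how Module 2 should extend the deliverable
}

// Returns a list of time-box violations; an empty list means the module
// fits in one sitting (30-45 minutes, at most 3 phases).
function timeBoxProblems(planModule: PlanModule): string[] {
  const problems: string[] = [];
  if (planModule.estimated_minutes < 30 || planModule.estimated_minutes > 45) {
    problems.push("estimated_minutes should be between 30 and 45");
  }
  if (planModule.phases.length === 0 || planModule.phases.length > 3) {
    problems.push("module should have 1 to 3 phases");
  }
  return problems;
}

// Example module using the film-producer case from this section.
const exampleModule: PlanModule = {
  focus: "A reusable client update template",
  exercise: "Draft, refine, and save a status email in your own voice",
  successcriteria: "One update sent to a real client",
  estimated_minutes: 40,
  phases: ["Draft", "Refine", "Reuse"],
};

console.log(timeBoxProblems(exampleModule));
```

A check like this could run on the generator's output before the plan is shown, so an oversized module is regenerated instead of reaching the user.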

Results

Tested December 2025. Compared with the previous prompts, the new ones produce more specific and more personal outputs:

- Section 1 captures specific industry, role, tools, and pain points

- Learning plans feel personally tailored instead of generic

- Module 1 focuses on building something the user actually wants

Status

Phase: Stopgap deployed and validated. Full "Learning Revolution" requires architectural changes.

Stopgap Status: ✅ Live in production

Last Updated: December 2025